Wget Html Links

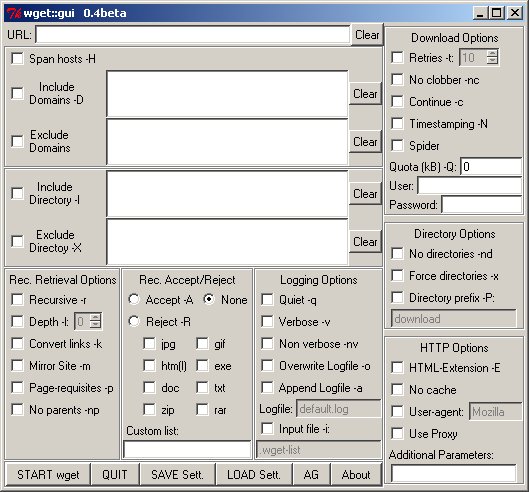

I would like to download a local copy of a web page and get all of the css, images, javascript, etc. In previous discussions (all more than two years old), two suggestions are generally put forward: wget -p and httrack. However, these suggestions both fail.

I would very much appreciate help with using either of these tools to accomplish the task; alternatives are also lovely.

Option 1: wget -p

wget -p successfully downloads all of the web page's prerequisites (css, images, js). However, when I load the local copy in a web browser, the page is unable to load the prerequisites because the paths to those prerequisites haven't been modified from the version on the web. For example:

• In the page's html, the link to foo.css will need to be corrected to point to the new relative path of foo.css.
• In the css file, background-image: url(/images/bar.png) will similarly need to be adjusted.

Is there a way to modify wget -p so that the paths are correct?


Option 2: httrack

httrack seems like a great tool for mirroring entire websites, but it's unclear to me how to use it to create a local copy of a single page. There is a great deal of discussion in the httrack forums about this topic, but no one seems to have a bullet-proof solution.

Option 3: another tool?

Some people have suggested paid tools, but I just can't believe there isn't a free solution out there. Thanks so much!

Wget is capable of doing what you are asking. Just try the following: wget -p -k, followed by the URL of the page.

The -p will get you all the required elements to view the site correctly (css, images, etc). The -k will change all links (including those for CSS & images) to allow you to view the page offline as it appeared online.
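For anyone who wants to script this, here is a minimal sketch of the same command driven from Python. The helper names `build_wget_command` and `save_page` are made up for illustration; the only wget options used are the long forms of -p and -k, and wget is assumed to be installed and on the PATH.

```python
import shutil
import subprocess


def build_wget_command(url):
    """Return the argument list for saving one page plus its requisites."""
    return [
        "wget",
        "--page-requisites",  # long form of -p: fetch css, images, js, etc.
        "--convert-links",    # long form of -k: rewrite links for local viewing
        url,
    ]


def save_page(url):
    """Run wget for a single page and return its exit status (hypothetical helper)."""
    if shutil.which("wget") is None:
        raise RuntimeError("wget was not found on PATH")
    return subprocess.run(build_wget_command(url), check=False).returncode
```

Calling `save_page` with the address of the page should leave a directory named after the host in the current folder, with the page and its requisites inside.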

From the Wget docs:

‘-k’ ‘--convert-links’

After the download is complete, convert the links in the document to make them suitable for local viewing. This affects not only the visible hyperlinks, but any part of the document that links to external content, such as embedded images, links to style sheets, hyperlinks to non-html content, etc. Each link will be changed in one of the two ways:

• The links to files that have been downloaded by Wget will be changed to refer to the file they point to as a relative link. Example: if the downloaded file /foo/doc.html links to /bar/img.gif, also downloaded, then the link in doc.html will be modified to point to ‘../bar/img.gif’. This kind of transformation works reliably for arbitrary combinations of directories.
• The links to files that have not been downloaded by Wget will be changed to include host name and absolute path of the location they point to. Example: if the downloaded file /foo/doc.html links to /bar/img.gif (or to ../bar/img.gif), then the link in doc.html will be modified to point to ‘http://hostname/bar/img.gif’.

Because of this, local browsing works reliably: if a linked file was downloaded, the link will refer to its local name; if it was not downloaded, the link will refer to its full Internet address rather than presenting a broken link.

The fact that the former links are converted to relative links ensures that you can move the downloaded hierarchy to another directory. Note that only at the end of the download can Wget know which links have been downloaded.

Because of that, the work done by ‘-k’ will be performed at the end of all the downloads.
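To make the two cases concrete, here is a rough Python sketch of the rule the docs describe. It is not Wget's actual implementation. The function `convert_link`, the `downloaded` set and the `base_url` argument are invented for this illustration, and the sketch only handles plain server paths. It also shows why the conversion has to wait until the end: the set of downloaded files must be complete before any link can be classified.

```python
import posixpath
from urllib.parse import urljoin


def convert_link(doc_path, link_path, downloaded, base_url):
    """Rewrite one link found in doc_path, following the two documented cases.

    doc_path   -- absolute server path of the saved document, e.g. /foo/doc.html
    link_path  -- the link as written in the document (absolute or relative path)
    downloaded -- set of absolute server paths that were saved locally
    base_url   -- site root, e.g. http://hostname/ (an assumption of this sketch)
    """
    doc_dir = posixpath.dirname(doc_path)
    # Resolve the link against the document's directory to get its server path.
    target = posixpath.normpath(posixpath.join(doc_dir, link_path))
    if target in downloaded:
        # Case 1: the file is local, so point at it with a relative path.
        return posixpath.relpath(target, start=doc_dir)
    # Case 2: the file is not local, so fall back to the full Internet address.
    return urljoin(base_url, target)


saved = {"/foo/doc.html", "/bar/img.gif"}
print(convert_link("/foo/doc.html", "/bar/img.gif", saved, "http://hostname/"))
print(convert_link("/foo/doc.html", "/bar/other.gif", saved, "http://hostname/"))
```

For a document at /foo/doc.html, the first call takes the relative branch and yields ../bar/img.gif, while the second yields an absolute http://hostname/... address because /bar/other.gif was never saved.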

Using a web browser (IE or Chrome) I can save a web page (.html) with Ctrl-S, inspect it with any text editor, and see data in a table format. I want to extract one of those numbers, but from far too many web pages to do it manually. So I'd like to use WGET to get those web pages one after another, and write another program to parse the .html and retrieve the number I want. But the .html file saved by WGET when using the same URL as the browser does not contain the data table. It is as if the server detects that the request is coming from WGET and not from a web browser, and supplies a skeleton web page, lacking the data table. How can I get the exact same web page with WGET?

MORE INFO: The URL I'm trying to fetch contains the string ICENX, a mutual fund ticker symbol, which I will be changing to any of a number of different ticker symbols. The page shows a table of data when viewed in a browser, but the data table is missing if fetched with WGET.
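One thing worth trying, assuming the server really does vary its response by client, is to send a browser-like User-Agent string. The sketch below only builds the wget commands for a list of tickers. The URL template, the User-Agent value and the helper names are placeholders, not taken from the question; `--user-agent` and `--output-document` are standard wget options.

```python
import subprocess


def build_fetch_command(url, user_agent, output_file):
    """Return a wget argument list that sends a custom User-Agent header."""
    return [
        "wget",
        f"--user-agent={user_agent}",       # identify as something other than Wget
        f"--output-document={output_file}", # save under a predictable name
        url,
    ]


def commands_for_tickers(url_template, tickers, user_agent):
    """Build one command per ticker; url_template must contain {ticker}."""
    return [
        build_fetch_command(url_template.format(ticker=t), user_agent, f"{t}.html")
        for t in tickers
    ]


def fetch_all(url_template, tickers, user_agent):
    """Run the commands one after another and return wget's exit codes."""
    return [
        subprocess.run(cmd, check=False).returncode
        for cmd in commands_for_tickers(url_template, tickers, user_agent)
    ]
```

If the table is still missing with a browser-like User-Agent, the data is probably filled in by JavaScript after the page loads. Wget does not execute scripts, so no header change will help in that case.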